Deploying OpenClaw on AWS Without the Security Disasters

- Oleksandr Kuzminskyi

- March 9, 2026


OpenClaw is everywhere right now. 247,000 GitHub stars, an AWS Lightsail blueprint, people running autonomous AI agents from their phones via WhatsApp. It’s legitimately impressive - an open-source agent that can manage your email, execute shell commands, browse the web, and remember context across sessions.

It’s also, in its default configuration, a security incident waiting to happen.

Bitsight found over 30,000 exposed OpenClaw instances in a two-week scan. Cisco’s security team tested a third-party skill and found it exfiltrating data via prompt injection. A one-click RCE vulnerability (CVE-2026-25253) let attackers leak tokens and execute arbitrary commands through a malicious link. The AWS Lightsail blueprint shipped with 31 unpatched security updates on day one.

OpenClaw’s own maintainer put it bluntly: “if you can’t understand how to run a command line, this is far too dangerous of a project for you to use safely.”

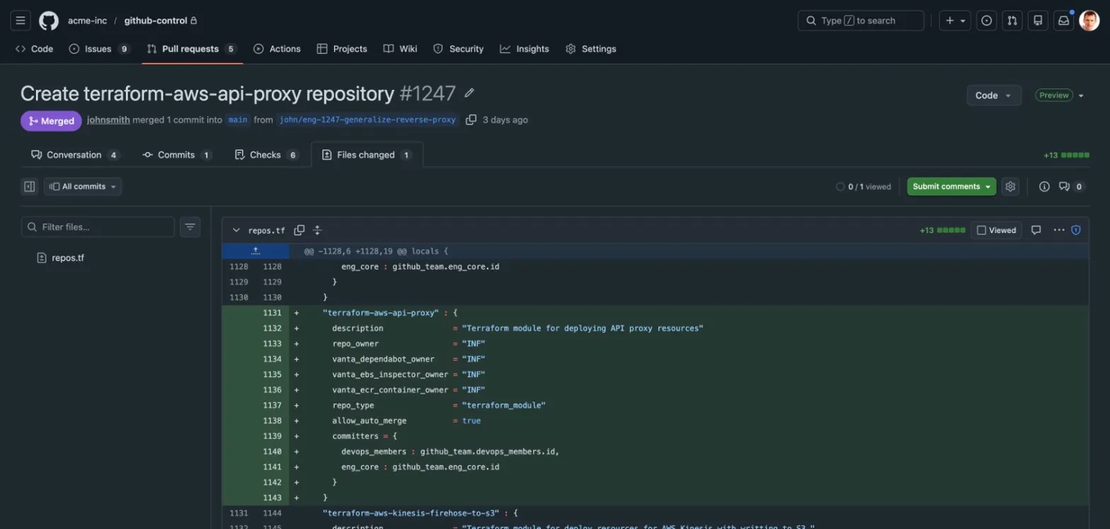

We wanted to run OpenClaw on AWS. But we wanted to do it properly - behind real authentication, with supply chain controls, filesystem isolation, encrypted secrets, and proper logging. So we built terraform-aws-openclaw, a Terraform module that replaces the default deployment patterns with production-grade infrastructure.

This post walks through the specific security problems we found and what we did about each one.

The Authentication Problem

OpenClaw’s default auth is a shared gateway token. Every client - browser, CLI, messaging app - presents the same secret over a WebSocket connection. This works fine when you’re running it on your laptop and you’re the only user. It falls apart the moment you put it behind a load balancer:

- The browser UI has no mechanism to obtain the token automatically.

- Embedding the token in the page would defeat the purpose of having one.

- There’s no concept of per-user identity - everyone shares the same credential.

The Lightsail blueprint inherits this model. AWS recommends rotating the token frequently and never exposing the gateway to the internet, but the blueprint is a publicly accessible instance with a single shared secret.

Our fix: Cognito + ALB + trusted-proxy

We replaced the entire auth model. Instead of a gateway token, we use AWS Cognito at the ALB layer with OpenClaw configured in trusted-proxy mode - the same Cognito + ALB pattern we used for our self-hosted Terraform registry:

Browser → ALB (Cognito OIDC) → EC2 (OpenClaw, trusted-proxy)

Cognito handles login, password policy, optional MFA, and session management. The ALB enforces authentication before any traffic reaches the instance. OpenClaw reads the user identity from the x-amzn-oidc-identity header set by the ALB.
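On the ALB side, the pattern boils down to a listener that chains an `authenticate-cognito` action in front of the `forward` action. Below is a minimal sketch in plain Terraform, not the module's actual code. The resource names (`aws_lb.openclaw`, `aws_acm_certificate.openclaw`, the Cognito pool, client and domain, `aws_lb_target_group.openclaw`) are hypothetical. It also assumes the Cognito app client was created with a client secret, the authorization code grant, and a callback URL ending in `/oauth2/idpresponse` on the ALB's hostname, since the ALB requires all three.

```hcl
# Hypothetical resources: aws_lb.openclaw, aws_acm_certificate.openclaw,
# aws_cognito_user_pool.openclaw, aws_cognito_user_pool_client.openclaw,
# aws_cognito_user_pool_domain.openclaw, aws_lb_target_group.openclaw
resource "aws_lb_listener" "https" {
  load_balancer_arn = aws_lb.openclaw.arn
  port              = 443
  protocol          = "HTTPS"
  ssl_policy        = "ELBSecurityPolicy-TLS13-1-2-2021-06"
  certificate_arn   = aws_acm_certificate.openclaw.arn

  # Step 1: requests without a valid Cognito session are redirected
  # to the Cognito hosted login page.
  default_action {
    type  = "authenticate-cognito"
    order = 1

    authenticate_cognito {
      user_pool_arn              = aws_cognito_user_pool.openclaw.arn
      user_pool_client_id        = aws_cognito_user_pool_client.openclaw.id
      user_pool_domain           = aws_cognito_user_pool_domain.openclaw.domain
      on_unauthenticated_request = "authenticate"
    }
  }

  # Step 2: only authenticated requests reach the backend, carrying the
  # x-amzn-oidc-identity / x-amzn-oidc-data headers added by the ALB.
  default_action {
    type             = "forward"
    order            = 2
    target_group_arn = aws_lb_target_group.openclaw.arn
  }
}
```

Because authentication happens in the listener, nothing unauthenticated ever reaches the target group, which is what lets the backend trust the identity headers.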

The trust boundary is enforced in two ways. First, security groups restrict backend EC2 ingress to only the ALB - no direct access to port 5173 from anywhere else. Second, OpenClaw’s trustedProxies configuration is populated automatically from the ALB subnet CIDRs, so it only accepts proxy headers from addresses that belong to the load balancer subnets. No hardcoded IPs.
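Both halves of that trust boundary can be expressed directly in Terraform. The sketch below assumes two hypothetical security groups (`aws_security_group.alb` and `aws_security_group.backend`) and an `alb_subnet_ids` input. The ingress rule references the ALB's security group rather than an IP range, and the trusted-proxy list is derived by looking up each ALB subnet's CIDR. How that list is rendered into OpenClaw's config is left out, because the exact config schema is not shown here.

```hcl
variable "alb_subnet_ids" {
  type = list(string)
}

# Backend port 5173 is reachable only from members of the ALB's security group.
# aws_security_group.alb and aws_security_group.backend are hypothetical.
resource "aws_vpc_security_group_ingress_rule" "openclaw_from_alb" {
  security_group_id            = aws_security_group.backend.id
  referenced_security_group_id = aws_security_group.alb.id
  ip_protocol                  = "tcp"
  from_port                    = 5173
  to_port                      = 5173
  description                  = "OpenClaw, reachable only through the ALB"
}

# Look up each ALB subnet to learn its CIDR block.
data "aws_subnet" "alb" {
  for_each = toset(var.alb_subnet_ids)
  id       = each.value
}

locals {
  # Candidate value for OpenClaw's trustedProxies list: derived, not hardcoded.
  trusted_proxy_cidrs = sort([for s in data.aws_subnet.alb : s.cidr_block])
}
```

Deriving the CIDRs from the subnet IDs means the allow-list follows the network automatically if the ALB subnets ever change.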

This gives us per-user identity, MFA support, no shared secrets, and the ALB handles session cookies - all without modifying OpenClaw’s code.

The Supply Chain Problem

The default OpenClaw setup guide and most community tutorials install the stack with three patterns that run unverified code as root - two `curl | sh` pipes and a global npm install:

- Node.js: `curl -fsSL https://deb.nodesource.com/setup_22.x | bash -`

- Ollama: `curl -fsSL https://ollama.com/install.sh | sh`

- OpenClaw: `npm install -g openclaw` (as root, writing to /usr/lib/node_modules)

Each one downloads code and executes it as root. If any of these URLs were compromised - a DNS hijack, CDN cache poisoning, a compromised maintainer account - you’d be running attacker code with full system privileges on a machine that has access to your email, calendar, shell, and LLM API keys.

Our fix: verified packages and unprivileged installs

We replaced each one:

Node.js is installed via APT with GPG key verification. The NodeSource repository is added through cloud-init’s extra_repos directive with the signing key fingerprint pinned. APT will refuse packages that don’t match the key.
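For readers not using the module, stock cloud-init can do the same thing with its `apt.sources` block. The sketch below is an illustration and not the module's `extra_repos` implementation. The `nodesource_key_fingerprint` input is hypothetical and should hold the full fingerprint from NodeSource's published key. The sketch assumes the key can be fetched by fingerprint from cloud-init's default keyserver and a cloud-init version that supports the `$KEY_FILE` placeholder.

```hcl
variable "nodesource_key_fingerprint" {
  description = "Full fingerprint of the NodeSource repository signing key (hypothetical input)"
  type        = string
}

locals {
  # Rendered as user data. cloud-init fetches the key by its pinned fingerprint,
  # and signed-by=$KEY_FILE scopes that key to this one repository.
  nodejs_cloud_config = join("\n", [
    "#cloud-config",
    yamlencode({
      apt = {
        sources = {
          nodesource = {
            source = "deb [signed-by=$KEY_FILE] https://deb.nodesource.com/node_22.x nodistro main"
            keyid  = var.nodesource_key_fingerprint
          }
        }
      }
      # Installed by APT, which rejects packages not signed by the pinned key.
      packages = ["nodejs"]
    })
  ])
}
```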

Ollama is installed from the official release tarball - a direct binary download, not a shell script. We extract the binary, install it to /usr/local/bin, and write the systemd unit inline. No arbitrary script execution.
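A rough cloud-init equivalent, written as Terraform, is sketched below. The `ollama_tarball_url` input is hypothetical. The sketch assumes a gzip-compressed release archive laid out as `bin/` and `lib/`, so that unpacking it under /usr/local puts the binary in /usr/local/bin. The unit runs as a dedicated `ollama` user with hardening directives chosen for this illustration. `OLLAMA_HOST` is Ollama's listen-address variable, and binding to loopback here is our assumption.

```hcl
variable "ollama_tarball_url" {
  description = "URL of the Ollama Linux release tarball (hypothetical input)"
  type        = string
}

locals {
  ollama_cloud_config = join("\n", [
    "#cloud-config",
    yamlencode({
      # The unit is written inline rather than generated by install.sh.
      write_files = [{
        path        = "/etc/systemd/system/ollama.service"
        permissions = "0644"
        content     = <<-EOT
          [Unit]
          Description=Ollama
          After=network-online.target
          Wants=network-online.target

          [Service]
          User=ollama
          Group=ollama
          ExecStart=/usr/local/bin/ollama serve
          Environment=OLLAMA_HOST=127.0.0.1:11434
          Environment=HOME=/var/lib/ollama
          StateDirectory=ollama
          ProtectSystem=strict
          ProtectHome=true
          PrivateTmp=true
          NoNewPrivileges=true
          Restart=on-failure

          [Install]
          WantedBy=multi-user.target
        EOT
      }]
      runcmd = [
        "useradd --system --home-dir /var/lib/ollama --shell /usr/sbin/nologin ollama",
        "curl -fsSL -o /tmp/ollama.tgz '${var.ollama_tarball_url}'",
        # Unpacking only; nothing from the download is executed at this step.
        "tar -C /usr/local -xzf /tmp/ollama.tgz",
        "systemctl daemon-reload",
        "systemctl enable --now ollama.service",
      ]
    })
  ])
}
```

`StateDirectory=ollama` gives the service a writable /var/lib/ollama even though `ProtectSystem=strict` makes the rest of the filesystem read-only.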

OpenClaw is installed as a local npm package under the dedicated openclaw user - not globally as root. The binary lives at /home/openclaw/openclaw-app/node_modules/.bin/openclaw. The openclaw user cannot write to system directories.
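The difference from `npm install -g` is who runs the install and where the files land. The sketch below shows the idea as cloud-init `runcmd` entries held in a Terraform local. The `openclaw_version` input is hypothetical, and pinning an exact version is our addition for illustration. `npm install --prefix DIR` installs into `DIR/node_modules`, and because it runs under `sudo -u openclaw`, any package lifecycle scripts execute as that unprivileged user.

```hcl
variable "openclaw_version" {
  description = "Exact openclaw npm version to install (hypothetical input)"
  type        = string
}

locals {
  openclaw_install_cmds = [
    # Dedicated service account with a home directory for the app.
    "useradd --create-home --shell /usr/sbin/nologin openclaw",
    "sudo -u openclaw -H mkdir -p /home/openclaw/openclaw-app",
    # Local install: the binary ends up at
    # /home/openclaw/openclaw-app/node_modules/.bin/openclaw, owned by openclaw.
    "sudo -u openclaw -H npm install --prefix /home/openclaw/openclaw-app openclaw@${var.openclaw_version}",
  ]
}
```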

The Filesystem Isolation Problem

OpenClaw’s default systemd configuration uses ProtectHome=read-only, which makes all home directories visible to the service (just not writable). On a shared system, this means OpenClaw can read files from other users’ home directories.

Our fix: tmpfs + BindPaths sandboxing

We use ProtectHome=tmpfs combined with BindPaths=/home/openclaw. This hides all home directories behind an empty tmpfs mount, then selectively exposes only the OpenClaw user’s home directory into the namespace. Combined with ProtectSystem=strict, NoNewPrivileges=true, and PrivateTmp=true, the service runs in a tightly constrained sandbox.
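Put together, the service section looks roughly like the unit below, held in a Terraform local so it can be written out by cloud-init. The sandboxing directives are the ones named above. The `gateway` subcommand in `ExecStart` and the `/etc/openclaw/provider-keys.env` path are assumptions made for this sketch, not confirmed details of the module.

```hcl
locals {
  openclaw_unit = <<-EOT
    [Unit]
    Description=OpenClaw
    After=network-online.target
    Wants=network-online.target

    [Service]
    User=openclaw
    Group=openclaw
    # Assumed subcommand; check the OpenClaw CLI for the exact invocation.
    ExecStart=/home/openclaw/openclaw-app/node_modules/.bin/openclaw gateway
    # Hypothetical path; the leading "-" makes the file optional.
    EnvironmentFile=-/etc/openclaw/provider-keys.env

    # Mount an empty tmpfs over /home, /root and /run/user...
    ProtectHome=tmpfs
    # ...then bind only the service's own home back in, read-write.
    BindPaths=/home/openclaw
    # Everything else on the filesystem is read-only for this service.
    ProtectSystem=strict
    PrivateTmp=true
    NoNewPrivileges=true
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
  EOT
}
```

With this layout, `ls /home` from inside the service shows only `openclaw`; other users' directories simply do not exist in its mount namespace.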

Ollama gets the same treatment - its own systemd unit, its own dedicated user, its own hardened directives.

The Secrets Problem

Many guides show API keys hardcoded in openclaw.json or passed as environment variables in the systemd unit file. Both end up in plaintext on disk.

Our fix: Secrets Manager + KMS

API keys for Anthropic, OpenAI, and other providers are stored in AWS Secrets Manager, encrypted with KMS via the infrahouse/secret/aws module. The instance role has secretsmanager:GetSecretValue permission scoped to the specific secret ARN - nothing else.
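Scoping the role to one secret is a single IAM statement. The sketch below uses hypothetical resource names (`aws_secretsmanager_secret.llm_keys`, `aws_iam_role.openclaw`). One caveat from how AWS works in general: when a secret is encrypted with a customer-managed KMS key, the reader also needs `kms:Decrypt` on that key, typically granted through the key policy. This sketch does not show how the infrahouse/secret module handles that.

```hcl
# Hypothetical resources: aws_secretsmanager_secret.llm_keys, aws_iam_role.openclaw
data "aws_iam_policy_document" "read_llm_keys" {
  statement {
    sid       = "ReadProviderKeys"
    actions   = ["secretsmanager:GetSecretValue"]
    # Exactly one secret ARN, with no wildcards.
    resources = [aws_secretsmanager_secret.llm_keys.arn]
  }
}

resource "aws_iam_role_policy" "read_llm_keys" {
  name   = "read-llm-keys"
  role   = aws_iam_role.openclaw.id
  policy = data.aws_iam_policy_document.read_llm_keys.json
}
```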

At boot, the setup script reads the secret and writes an environment file that the systemd service loads. If the secret hasn’t been populated yet, the service starts without those providers and logs a warning. No crash, no plaintext fallback.
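The boot-time behavior can be sketched as a small shell script rendered from Terraform. Several details here are assumptions: the `llm_secret_arn` input and the env-file path are hypothetical, the secret is assumed to be a flat JSON object such as `{"ANTHROPIC_API_KEY": "..."}`, and the AWS CLI and jq are assumed to be installed. A missing or empty secret falls through to a warning and an empty env file rather than a failure.

```hcl
variable "llm_secret_arn" {
  description = "ARN of the Secrets Manager secret holding provider API keys (hypothetical input)"
  type        = string
}

locals {
  # Runs once at boot, before openclaw.service starts.
  fetch_llm_keys_script = <<-EOT
    #!/bin/bash
    set -uo pipefail
    ENV_FILE=/etc/openclaw/provider-keys.env
    install -d -m 0750 /etc/openclaw
    umask 077

    if SECRET=$(aws secretsmanager get-secret-value \
          --secret-id "${var.llm_secret_arn}" \
          --query SecretString --output text 2>/dev/null) \
       && printf '%s' "$SECRET" | jq -e 'type == "object"' >/dev/null 2>&1; then
      # One KEY=value line per entry, the format systemd's EnvironmentFile= reads.
      printf '%s' "$SECRET" | jq -r 'to_entries[] | "\(.key)=\(.value)"' > "$ENV_FILE"
    else
      # Secret missing or not populated yet: start without the extra providers.
      logger -t openclaw-setup "WARNING: provider secret not populated; starting without extra LLM providers"
      : > "$ENV_FILE"
    fi
  EOT
}
```

Because systemd reads `EnvironmentFile=` as root before dropping privileges, the file can stay root-only (mode 0600) and still reach the service.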

The Persistence Problem

This one isn’t strictly a security issue, but it matters for operational security. OpenClaw stores its configuration, agent memory, and conversation history in ~/.openclaw/. On a bare EC2 instance, if the instance gets replaced - AMI rotation, ASG health check, spot termination - all of that data is gone.

In a Lightsail blueprint, there’s no ASG, so you’re running a single instance with no automatic recovery and no data persistence beyond the instance lifecycle.

Our fix: encrypted EFS with backups

The module mounts an encrypted EFS filesystem at /home/openclaw/.openclaw. Agent data, configuration, and conversation history survive instance replacement. EFS backups are enabled by default.
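In Terraform terms, "encrypted EFS with backups" is three resources plus a mount entry on the instance. The sketch below assumes hypothetical `aws_kms_key.efs` and `aws_security_group.efs` resources and a `backend_subnet_ids` input with one subnet per Availability Zone, since EFS allows only one mount target per AZ. The fstab line assumes the amazon-efs-utils mount helper is installed.

```hcl
variable "backend_subnet_ids" {
  type = list(string)
}

# Hypothetical resources: aws_kms_key.efs, aws_security_group.efs
resource "aws_efs_file_system" "openclaw" {
  encrypted  = true
  kms_key_id = aws_kms_key.efs.arn
  tags       = { Name = "openclaw-data" }
}

# Turns on AWS Backup's automatic daily backups for this filesystem.
resource "aws_efs_backup_policy" "openclaw" {
  file_system_id = aws_efs_file_system.openclaw.id

  backup_policy {
    status = "ENABLED"
  }
}

resource "aws_efs_mount_target" "openclaw" {
  for_each        = toset(var.backend_subnet_ids)
  file_system_id  = aws_efs_file_system.openclaw.id
  subnet_id       = each.value
  security_groups = [aws_security_group.efs.id]
}

locals {
  # /etc/fstab entry for the instance (requires amazon-efs-utils); tls encrypts in transit.
  openclaw_efs_fstab = "${aws_efs_file_system.openclaw.id}:/ /home/openclaw/.openclaw efs _netdev,noresvport,tls 0 0"
}
```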

The configuration management uses a deep-merge strategy: Terraform manages infrastructure keys (auth mode, trusted proxies, model providers), while operational settings changed through the OpenClaw UI persist on EFS across instance replacements. Neither side overwrites the other.
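One way to get that deep-merge behavior is jq's `*` operator, which merges objects recursively. On a conflicting non-object value, the right-hand side wins, and keys that appear only on the left are kept. In the sketch below, the config key names and the `trusted_proxy_cidrs` input are hypothetical, as is the assumption that the file is `openclaw.json` under ~/.openclaw. The point is the ordering: the UI-edited file on EFS goes first, and the Terraform-owned keys go second.

```hcl
variable "trusted_proxy_cidrs" {
  description = "ALB subnet CIDRs (hypothetical input)"
  type        = list(string)
}

locals {
  # Keys Terraform owns. These names are illustrative; OpenClaw's real schema may differ.
  managed_config = jsonencode({
    gateway = {
      auth           = { mode = "trusted-proxy" }
      trustedProxies = var.trusted_proxy_cidrs
    }
  })

  # Boot-time merge: existing file first, Terraform keys second, so Terraform
  # wins on the keys it manages and every other key survives untouched.
  merge_config_script = <<-EOT
    #!/bin/bash
    set -euo pipefail
    CFG=/home/openclaw/.openclaw/openclaw.json
    MANAGED=$(mktemp)
    printf '%s\n' '${local.managed_config}' > "$MANAGED"
    [ -s "$CFG" ] || printf '{}\n' > "$CFG"
    jq -s '.[0] * .[1]' "$CFG" "$MANAGED" > "$CFG.new"
    mv "$CFG.new" "$CFG"
    chown openclaw:openclaw "$CFG"
    rm -f "$MANAGED"
  EOT
}
```

Arrays are replaced rather than merged by `*`, so a list like the trusted proxies is always exactly what Terraform rendered.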

The Logging Problem

For any system with ISO 27001 or SOC 2 requirements, you need audit logs with defined retention. OpenClaw’s default setup logs to journald on the instance - if the instance is gone, the logs are gone.

Our fix: CloudWatch with KMS encryption

A CloudWatch log group with 365-day retention receives journald logs from both openclaw.service and ollama.service. The log group is encrypted with a dedicated KMS key. The CloudWatch agent filters and forwards logs automatically.
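The part that usually trips people up is the key policy. CloudWatch Logs can only use a customer-managed key if the key policy allows the regional `logs` service principal, and creating the log group fails otherwise. The sketch below follows AWS's documented pattern, with a hypothetical log group name. It uses `data.aws_region.current.name`, which AWS provider v6 deprecates in favor of `.region`.

```hcl
data "aws_caller_identity" "current" {}
data "aws_region" "current" {}

locals {
  account_id = data.aws_caller_identity.current.account_id
  region     = data.aws_region.current.name
}

data "aws_iam_policy_document" "logs_key" {
  # Keep the account in control of the key.
  statement {
    sid       = "AccountAdmin"
    actions   = ["kms:*"]
    resources = ["*"]
    principals {
      type        = "AWS"
      identifiers = ["arn:aws:iam::${local.account_id}:root"]
    }
  }

  # Let CloudWatch Logs use the key, only for log groups in this account and region.
  statement {
    sid = "CloudWatchLogs"
    actions = [
      "kms:Encrypt*",
      "kms:Decrypt*",
      "kms:ReEncrypt*",
      "kms:GenerateDataKey*",
      "kms:Describe*",
    ]
    resources = ["*"]
    principals {
      type        = "Service"
      identifiers = ["logs.${local.region}.amazonaws.com"]
    }
    condition {
      test     = "ArnLike"
      variable = "kms:EncryptionContext:aws:logs:arn"
      values   = ["arn:aws:logs:${local.region}:${local.account_id}:log-group:*"]
    }
  }
}

resource "aws_kms_key" "logs" {
  description         = "OpenClaw CloudWatch Logs"
  enable_key_rotation = true
  policy              = data.aws_iam_policy_document.logs_key.json
}

resource "aws_cloudwatch_log_group" "openclaw" {
  name              = "/openclaw/production" # hypothetical name
  retention_in_days = 365
  kms_key_id        = aws_kms_key.logs.arn
}
```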

The Full Picture

Here’s a summary of what we changed from the default OpenClaw setup:

| Area | Default guide | Our approach |
|---|---|---|
| Authentication | Shared gateway token | Cognito + ALB + trusted-proxy |
| Node.js install | `curl \| bash` | APT repo with GPG key verification |
| Ollama install | `curl \| sh` | Direct binary tarball |
| OpenClaw install | `npm install -g` (root) | Local npm install (unprivileged user) |
| Systemd ProtectHome | read-only | tmpfs + BindPaths |
| Secrets | Plaintext in config | Secrets Manager + KMS |
| Persistence | Instance root volume | Encrypted EFS with backups |
| Logging | journald on instance | CloudWatch, 365-day retention, KMS |
| Gateway bind | Loopback | LAN + trusted proxy CIDRs (auto-derived) |

Using the Module

The module is on the Terraform Registry and GitHub. A minimal deployment:

```hcl
module "openclaw" {
  source  = "registry.infrahouse.com/infrahouse/openclaw/aws"
  version = "0.2.0"

  providers = {
    aws     = aws
    aws.dns = aws
  }

  environment        = "production"
  zone_id            = aws_route53_zone.example.zone_id
  alb_subnet_ids     = module.network.subnet_public_ids
  backend_subnet_ids = module.network.subnet_private_ids
  alarm_emails       = ["ops@example.com"]

  cognito_users = [
    {
      email     = "admin@example.com"
      full_name = "Admin User"
    },
  ]
}
```

It works out of the box - Amazon Bedrock with Amazon Nova 2 Lite is the default LLM provider, no API keys required. You can add Anthropic, OpenAI, or local Ollama models at any time by populating the Secrets Manager secret or configuring models through the OpenClaw UI.

The security considerations doc covers every decision in detail, including the ALB header trust model, WebSocket origin control, and Cognito hardening options.

OpenClaw is a powerful tool. It deserves infrastructure that takes its power seriously.